Introduction

Digital food platforms in India have revolutionized access to meals, yet they have also made decision-making more complex for students. With thousands of menu items, images, and reviews available online, many users struggle to translate their cravings into precise search queries. Traditional platforms rely heavily on keyword matching, which often fails to understand intent, emotion, or context behind food choices.

An AI food recommendation system addresses this challenge by interpreting meaning rather than merely matching words. By combining natural language understanding, image analysis, and semantic search, such a system reduces cognitive overload and helps users discover food in a more intuitive way. Instead of forcing students to scroll endlessly or guess search terms, the system draws on dish descriptions, images, and earlier turns in the conversation to deliver more relevant suggestions.

This approach aligns with how real people think about food: visually, emotionally, and contextually. Whether a user says “something warm and comforting” or uploads a photo from Instagram, the AI food recommendation system translates these signals into meaningful dish recommendations. The goal is to make food discovery not just faster, but also more enjoyable and personalized for young users in India.

Background and Motivation

For more than a decade, most food apps have relied on keyword-based retrieval such as TF-IDF and on collaborative filtering, which recommends items based on the behavior of similar users. While useful, neither technique captures culinary meaning: TF-IDF rewards shared words, and collaborative filtering sees only co-occurrence in past orders. For example, a query like “rich winter soup” may miss dishes named in another language or described differently on menus. This limitation creates a major barrier for Indian college students exploring diverse cuisines.
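To make the lexical limitation concrete, the following minimal Python sketch scores a query against a few menu lines using plain TF-IDF with cosine similarity. The three menu strings are invented toy data, and `tokenize` and `keyword_scores` are hypothetical helpers, not code from any real platform. Thukpa, a hot noodle broth that arguably fits “rich winter soup”, scores zero because it shares no word with the query, while the shorba matches only on the literal word “soup”.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase and split into alphabetic words (no stemming, no synonyms)."""
    return re.findall(r"[a-z]+", text.lower())


def keyword_scores(query: str, menu: list[str]) -> list[float]:
    """Cosine similarity between TF-IDF vectors of the query and each menu line."""
    docs = [tokenize(line) for line in menu]
    n = len(docs)
    doc_freq = Counter(term for tokens in docs for term in set(tokens))
    # Smoothed inverse document frequency: rarer terms get larger weights.
    idf = {t: math.log((1 + n) / (1 + df)) + 1 for t, df in doc_freq.items()}

    def vectorize(tokens: list[str]) -> dict[str, float]:
        # Words absent from the menu vocabulary are simply dropped.
        return {t: c * idf[t] for t, c in Counter(tokens).items() if t in idf}

    def norm(vec: dict[str, float]) -> float:
        return math.sqrt(sum(w * w for w in vec.values()))

    q = vectorize(tokenize(query))
    scores = []
    for tokens in docs:
        d = vectorize(tokens)
        denom = norm(q) * norm(d)
        dot = sum(w * d.get(t, 0.0) for t, w in q.items())
        scores.append(dot / denom if denom else 0.0)
    return scores


# Toy menu lines, invented for illustration.
MENU = [
    "Thukpa: hot Tibetan noodle broth with vegetables",
    "Tomato shorba, a spiced North Indian soup",
    "Cold coffee with ice cream",
]

if __name__ == "__main__":
    for line, score in zip(MENU, keyword_scores("rich winter soup", MENU)):
        print(f"{score:.2f}  {line}")
```

A semantic system aims to close exactly this gap: a broth described without the word “soup” should still rank near the top.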

Modern AI foundation models, especially Large Language Models (LLMs) and their multimodal vision-language counterparts, have changed this landscape. These models capture relationships between words and concepts, and in the multimodal case between text and images, enabling semantic interpretation of food preferences. When integrated into an AI food recommendation system, they allow users to search in natural language rather than rigid menu terms.

At the same time, vector search libraries such as FAISS have made fast semantic retrieval practical, even over large collections. Instead of retrieving items that simply contain matching words, the system finds dishes whose embeddings lie closest to the embedding of the user’s craving. This shift from keyword search to semantic food retrieval marks a major advancement in digital food discovery.
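The retrieval step can be illustrated with an exact nearest-neighbour search. This sketch is written in plain Python rather than FAISS and ranks dishes by cosine similarity between a query vector and dish vectors. The three-dimensional vectors and their loose labels are hand-made assumptions for illustration; real embedding models produce hundreds or thousands of unlabeled dimensions. In FAISS, the equivalent exact search is an `IndexFlatIP` over L2-normalized vectors (for example, after `faiss.normalize_L2`), while approximate indexes such as IVF or HNSW trade a little recall for speed on larger menus.

```python
import math


def normalize(vec: list[float]) -> list[float]:
    """Scale a vector to unit length so the inner product equals cosine similarity."""
    length = math.sqrt(sum(x * x for x in vec))
    return [x / length for x in vec] if length else list(vec)


def top_k(query: list[float], index: dict[str, list[float]], k: int = 3) -> list[tuple[str, float]]:
    """Exact (brute-force) search: score every dish and return the k most similar."""
    q = normalize(query)
    scored = [
        (name, sum(a * b for a, b in zip(q, normalize(vec))))
        for name, vec in index.items()
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hand-made 3-d vectors; dimensions loosely stand for (warm, soupy, sweet).
# Real embeddings are far larger and their dimensions carry no such labels.
DISH_VECTORS = {
    "Thukpa": [0.9, 0.9, 0.1],
    "Tomato shorba": [0.8, 0.9, 0.2],
    "Gulab jamun": [0.6, 0.1, 0.9],
    "Cold coffee": [0.0, 0.3, 0.8],
}

if __name__ == "__main__":
    craving = [0.9, 0.8, 0.1]  # assumed embedding of "rich winter soup"
    print([name for name, _ in top_k(craving, DISH_VECTORS, k=2)])  # ['Thukpa', 'Tomato shorba']
```

Brute force is fine for a few thousand dishes; an index library mainly pays off as the collection and query volume grow.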

The motivation behind this project is to bridge three major gaps in existing platforms: difficulty in expressing cravings, inability to search using images, and lack of conversational memory. By integrating multimodal AI with vector search, the AI food recommendation system acts as a smart culinary assistant rather than a traditional search engine.

Problem Statement

Current food recommendation platforms face several technical and usability limitations that restrict meaningful discovery. First, traditional search engines operate at a lexical level, meaning they match words but not ideas. As a result, users may miss relevant dishes simply because of different wording on menus.

Second, most systems treat each query independently, failing to maintain conversational context. If a user asks for “pasta,” then says “something lighter,” and later “with seafood,” the system cannot connect these steps into a single evolving request. An AI food recommendation system overcomes this by retaining context across interactions.
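One simple way to carry context across turns is to keep the session’s messages and build each search query from the recent ones. The `Session` class below is a hypothetical sketch, not the prototype’s implementation; in a fuller system, an LLM might rewrite the accumulated turns into a single standalone query instead of joining them with semicolons.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    """Hypothetical per-session memory: user turns, oldest first."""

    turns: list[str] = field(default_factory=list)

    def add_turn(self, text: str) -> None:
        text = text.strip()
        if text:  # ignore empty messages
            self.turns.append(text)

    def search_query(self, max_turns: int = 5) -> str:
        """Join the most recent turns into one query for semantic search."""
        return "; ".join(self.turns[-max_turns:])


if __name__ == "__main__":
    session = Session()
    for message in ["pasta", "something lighter", "with seafood"]:
        session.add_turn(message)
    print(session.search_query())  # pasta; something lighter; with seafood
```

Capping the number of turns keeps old, possibly abandoned preferences from dominating the query.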

Third, existing platforms are largely text-based, even though food is a visual experience. Users who see a dish online often struggle to describe it in words and lose important details such as texture, plating, or ingredients. The proposed AI food recommendation system allows image-based search, reducing this friction.

Finally, critical dish information—such as nutrition, allergens, price, and preparation time—is often scattered across multiple screens. This fragmentation increases decision fatigue. By centralizing data and presenting it clearly, the system creates a smoother and more informed decision-making process.

Objectives of the Study

The primary objective is to design and implement a working prototype of an AI food recommendation system that integrates LLMs, vision models, and vector search technologies. This system aims to demonstrate how multimodal AI can enhance food discovery through intelligent retrieval and conversational interaction.

A structured data pipeline is developed to process menu datasets containing dish names, descriptions, images, and nutritional details. These records are cleaned and converted into vector embeddings using cloud-based AI services. A FAISS index enables fast similarity-based retrieval of relevant dishes.
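The cleaning and preparation step might look like the following sketch. The field names (`name`, `description`, `cuisine`, `image_path`, `calories`) and the rule of de-duplicating by lower-cased dish name are assumptions about the dataset, not its documented schema. `embedding_text` builds the string that would be passed to the embedding service for each dish.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MenuItem:
    """One cleaned dish record (field names are assumptions about the dataset)."""

    name: str
    description: str
    cuisine: str = ""
    image_path: Optional[str] = None
    calories: Optional[int] = None


def _squash(value) -> str:
    """Convert to string and collapse runs of whitespace."""
    return " ".join(str(value or "").split())


def clean_records(raw: list[dict]) -> list[MenuItem]:
    """Drop rows without a name, normalize whitespace, and de-duplicate by name."""
    seen = set()
    items = []
    for row in raw:
        name = _squash(row.get("name"))
        if not name or name.lower() in seen:
            continue
        seen.add(name.lower())
        calories = row.get("calories")
        items.append(
            MenuItem(
                name=name,
                description=_squash(row.get("description")),
                cuisine=_squash(row.get("cuisine")),
                image_path=row.get("image_path") or None,  # missing images stay None
                calories=int(calories) if calories not in (None, "") else None,
            )
        )
    return items


def embedding_text(item: MenuItem) -> str:
    """String that would be sent to the embedding service for this dish."""
    parts = [item.name, item.description]
    if item.cuisine:
        parts.append(f"Cuisine: {item.cuisine}")
    return ". ".join(p for p in parts if p)
```

Embedding a short composed string, rather than the name alone, gives the model enough context to separate dishes with similar names.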

The system also supports image-based input, allowing users to upload photos that are analyzed by a multimodal model. These visual insights are merged with any accompanying text query to create a richer search representation. Additionally, a conversational layer maintains context within a session so that the AI food recommendation system can refine suggestions as the user gives feedback.
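One possible way to merge visual and textual signals is late fusion: a weighted average of the two query embeddings, re-normalized to unit length. This is an assumption about the design rather than the documented method, and it only makes sense when both vectors live in the same embedding space, for example when the multimodal model’s description of the photo is embedded with the same text embedding model as the typed query. The `image_weight` parameter is hypothetical and would need tuning.

```python
import math
from typing import Optional


def l2_normalize(vec: list[float]) -> list[float]:
    """Scale to unit length; a zero vector is returned unchanged."""
    length = math.sqrt(sum(x * x for x in vec))
    return [x / length for x in vec] if length else list(vec)


def fuse_query(
    image_vec: Optional[list[float]],
    text_vec: Optional[list[float]],
    image_weight: float = 0.5,  # hypothetical default; would need tuning
) -> list[float]:
    """Late fusion: weighted average of unit-length image and text query vectors."""
    if image_vec is None and text_vec is None:
        raise ValueError("need an image embedding, a text embedding, or both")
    if image_vec is None:
        return l2_normalize(text_vec)
    if text_vec is None:
        return l2_normalize(image_vec)
    if len(image_vec) != len(text_vec):
        raise ValueError("both embeddings must come from the same vector space")
    img, txt = l2_normalize(image_vec), l2_normalize(text_vec)
    mixed = [image_weight * a + (1 - image_weight) * b for a, b in zip(img, txt)]
    return l2_normalize(mixed)
```

Normalizing each input first keeps one modality from dominating simply because its raw vector is longer.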

A Streamlit-based user interface ensures accessibility and ease of use, making the system suitable for academic research and real-world experimentation.

Scope of the Project

The project delivers a fully functional prototype operating on a curated dataset of 1,000 to 5,000 menu items with images and metadata. The AI food recommendation system supports both text and image inputs, semantic search, and structured responses within a single session.

Basic error handling is included to manage issues such as missing images, API failures, or invalid inputs. Performance optimizations such as embedding caching and efficient index loading are also implemented. However, the system does not integrate live restaurant data, real-time pricing, or delivery tracking.
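Embedding caching and retry-on-failure can be combined in a small wrapper. The `CachedEmbedder` class below is a hypothetical sketch: it keys an in-memory cache on a SHA-256 hash of the trimmed text, rejects empty input, and retries transient errors with exponential backoff. Which exceptions count as transient depends on the real client, so the `retry_on` default is an assumption to adjust.

```python
import hashlib
import time
from typing import Callable


class CachedEmbedder:
    """Hypothetical wrapper: in-memory embedding cache plus retries with backoff."""

    def __init__(
        self,
        embed_fn: Callable[[str], list[float]],
        max_retries: int = 3,
        backoff_s: float = 0.5,
        retry_on: tuple = (ConnectionError, TimeoutError),  # assumption: transient errors
    ):
        if max_retries < 1:
            raise ValueError("max_retries must be at least 1")
        self.embed_fn = embed_fn
        self.max_retries = max_retries
        self.backoff_s = backoff_s
        self.retry_on = retry_on
        self._cache: dict[str, list[float]] = {}

    def embed(self, text: str) -> list[float]:
        text = text.strip()
        if not text:
            raise ValueError("cannot embed empty text")  # invalid input
        key = hashlib.sha256(text.encode("utf-8")).hexdigest()
        if key in self._cache:
            return self._cache[key]  # cache hit: no API call
        for attempt in range(self.max_retries):
            try:
                vec = self.embed_fn(text)
                break
            except self.retry_on:
                if attempt == self.max_retries - 1:
                    raise  # give up after the last attempt
                time.sleep(self.backoff_s * 2 ** attempt)  # wait doubles each attempt
        self._cache[key] = vec
        return vec
```

For the index itself, FAISS provides `faiss.write_index` and `faiss.read_index`, so a built index can be saved once and loaded at startup instead of being rebuilt from embeddings.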

User authentication, long-term personalization, mobile app development, and multilingual support are excluded from scope. The system assumes stable internet connectivity and reasonably clean image data, as it is designed primarily for research and demonstration rather than commercial deployment.

Industry Relevance

Food delivery platforms like Swiggy and Zomato could integrate this AI food recommendation system as a premium visual search feature, allowing users to upload images or describe cravings naturally. This would likely improve engagement and reduce customer support inquiries.

Restaurant management systems could use the system to analyze menu similarities, detect inconsistencies, and generate richer dish descriptions automatically. Nutrition platforms such as MyFitnessPal could enable photo-based meal logging and suggest healthier alternatives using semantic matching.

Beyond food, the same architecture could be applied to e-commerce, travel, and social media shopping. For example, users could search for fashion items by uploading images, or describe the experiences they want when planning trips. This suggests that the architecture behind the AI food recommendation system is applicable well beyond culinary use cases.

System Use Cases

Text-Based Discovery

Users describe their cravings in natural language, and the AI food recommendation system refines the query before performing semantic search to return ranked dish suggestions.

Visual Food Discovery

Users upload an image, which the system analyzes to extract key features before retrieving visually and conceptually similar dishes.

Conversational Refinement

The system retains context, allowing users to iteratively modify preferences such as “less spicy” or “more budget-friendly.”
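Refinements such as “less spicy” or “more budget-friendly” can be applied as constraints on dish metadata relative to the dish the user is reacting to. The phrase-to-filter rules, the `spice_level` scale, and the dishes and prices in the example are illustrative assumptions; the described system would more likely let the LLM interpret free-form feedback.

```python
from dataclasses import dataclass


@dataclass
class Dish:
    name: str
    spice_level: int  # hypothetical scale: 0 (mild) to 5 (very hot)
    price_inr: int


def apply_feedback(candidates: list[Dish], reference: Dish, feedback: str) -> list[Dish]:
    """Keep candidates that satisfy the feedback relative to the reference dish.

    The phrase-to-filter rules are illustrative assumptions; feedback that
    matches no rule leaves the candidate list unchanged.
    """
    text = feedback.lower()
    kept = list(candidates)
    if "less spicy" in text or "milder" in text:
        kept = [d for d in kept if d.spice_level < reference.spice_level]
    if "budget" in text or "cheaper" in text:
        kept = [d for d in kept if d.price_inr < reference.price_inr]
    return kept


if __name__ == "__main__":
    current = Dish("Chicken Chettinad", 4, 320)  # toy data
    options = [Dish("Butter Chicken", 2, 350), Dish("Chicken Stew", 1, 220), Dish("Chicken 65", 4, 200)]
    print([d.name for d in apply_feedback(options, current, "less spicy and cheaper")])  # ['Chicken Stew']
```

Filtering the semantically ranked candidates, rather than re-searching from scratch, preserves the ordering that retrieval already produced.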

System Architecture

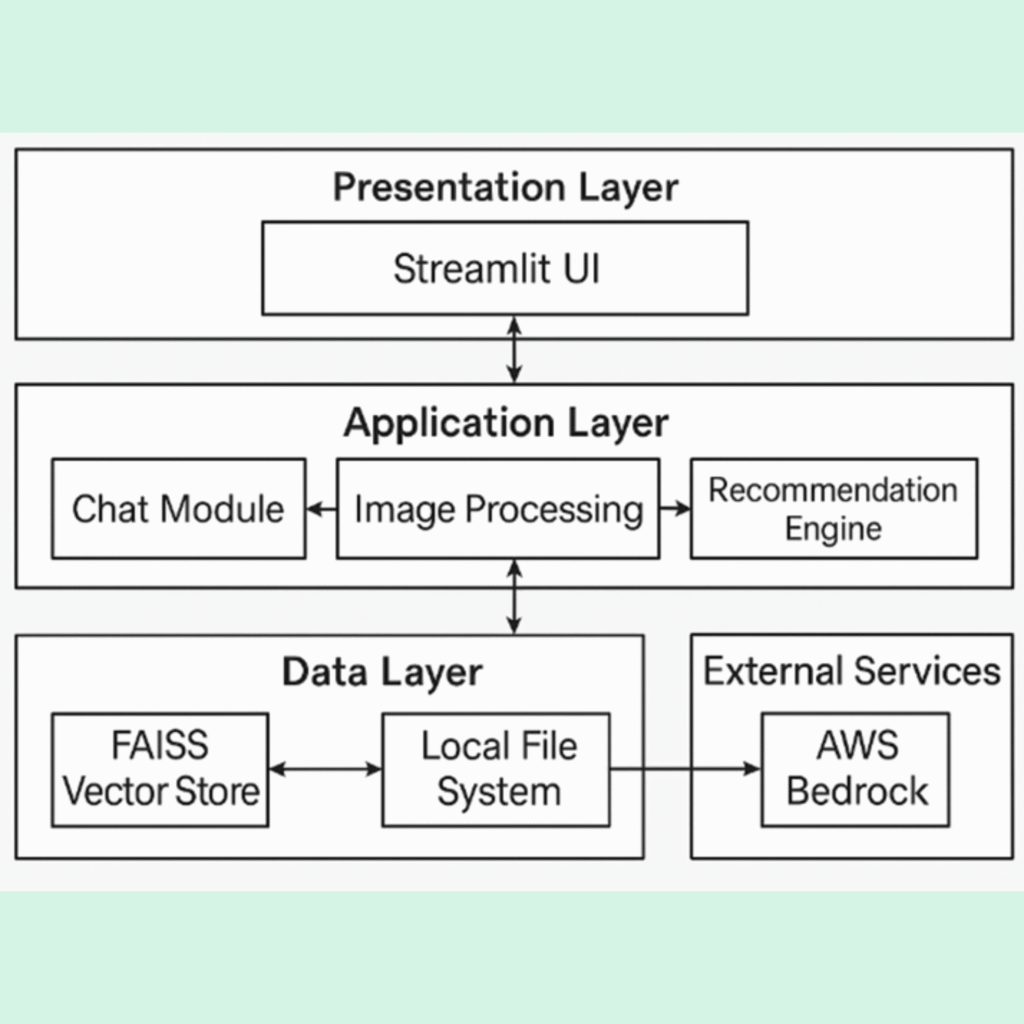

The system follows a layered architecture consisting of a Streamlit user interface, an application layer for processing, a FAISS vector index for storage and retrieval, and cloud-based AI services. User inputs (text or images) are first preprocessed and then converted into embeddings for semantic retrieval.

FAISS performs similarity search to identify the dishes closest to the query embedding, and an LLM turns the retrieved records into structured, user-friendly responses. Together, these stages take a loosely worded request or a photo and return a short list of relevant, clearly presented dishes.
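Asking the LLM to return JSON and validating it before display helps keep the structured output dependable. The schema below (name, price in rupees, calories, rating, and a short reason) is a hypothetical example based on the dish information this document lists, not the prototype’s actual format.

```python
import json
from dataclasses import dataclass

NUMBER = (int, float)
# Hypothetical schema the LLM is prompted to follow for each suggested dish.
REQUIRED_FIELDS = {"name": str, "price_inr": NUMBER, "calories": NUMBER, "rating": NUMBER, "why": str}


@dataclass
class DishCard:
    name: str
    price_inr: float
    calories: float
    rating: float
    why: str  # one-line reason the dish matches the request


def parse_llm_response(raw: str) -> list[DishCard]:
    """Parse the model's JSON reply; raise ValueError if it does not fit the schema."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, list):
        raise ValueError("expected a JSON array of dishes")
    cards = []
    for i, item in enumerate(data):
        if not isinstance(item, dict):
            raise ValueError(f"dish {i} is not a JSON object")
        for key, expected in REQUIRED_FIELDS.items():
            value = item.get(key)
            # bool is a subclass of int in Python, so reject it explicitly.
            if not isinstance(value, expected) or isinstance(value, bool):
                raise ValueError(f"dish {i}: missing or invalid '{key}'")
        cards.append(
            DishCard(
                name=item["name"],
                price_inr=float(item["price_inr"]),
                calories=float(item["calories"]),
                rating=float(item["rating"]),
                why=item["why"],
            )
        )
    return cards
```

If validation fails, the application could retry the request or fall back to showing the raw retrieved records.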

Technology Stack

The prototype is built using Streamlit for rapid UI development, AWS Bedrock for access to foundation models, Amazon Titan for embeddings, and Claude for multimodal reasoning over text and images. FAISS provides fast vector search, while Python libraries handle image processing, environment variables, and logging. Together, these tools make the AI food recommendation system modular and easy to extend for research.
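As an illustration of how Amazon Titan embeddings are requested through AWS Bedrock, the following sketch prepares a call to the `bedrock-runtime` client’s `invoke_model` operation. The model ID `amazon.titan-embed-text-v1` is an assumption, since the document does not say which Titan version the prototype uses. The request and response handling are split into small helpers so they can be checked without AWS credentials.

```python
import json
from typing import Any

# Assumption: the Titan text-embedding model version; the document does not specify one.
TITAN_MODEL_ID = "amazon.titan-embed-text-v1"


def titan_request(text: str, model_id: str = TITAN_MODEL_ID) -> dict:
    """Keyword arguments for bedrock-runtime's invoke_model for a Titan text embedding."""
    return {
        "modelId": model_id,
        "body": json.dumps({"inputText": text}),
        "contentType": "application/json",
        "accept": "application/json",
    }


def parse_titan_response(raw_body: bytes) -> list[float]:
    """Extract the embedding vector from Titan's JSON response body."""
    return json.loads(raw_body)["embedding"]


def titan_embed(client: Any, text: str) -> list[float]:
    """Call Bedrock and return the embedding; `client` is a boto3 bedrock-runtime client."""
    response = client.invoke_model(**titan_request(text))
    return parse_titan_response(response["body"].read())
```

With `boto3` installed and AWS credentials configured, calling `titan_embed(boto3.client("bedrock-runtime"), "spicy street-style paneer roll")` would return the embedding as a list of floats, ready to be added to the FAISS index.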

Conclusion and Future Work

This research demonstrates that an AI food recommendation system significantly outperforms traditional keyword-based methods by integrating language, vision, and semantic search. The system improves precision, engagement, and user satisfaction through conversational interaction.

While scalability and cultural coverage need enhancement, the prototype provides a strong foundation for next-generation food discovery platforms. Future work includes long-term personalization, multilingual support, real-time restaurant data integration, and advanced dietary filtering.

As AI continues to evolve, multimodal and conversational systems like this will play a crucial role in transforming digital commerce and everyday decision-making.

What is an AI Food Recommendation System?

An AI food recommendation system is an intelligent platform that suggests dishes based on user intent, images, and context rather than simple keyword matching.

Key features include:

Understanding natural language cravings

Processing food images for visual search

Using vector similarity search for semantic matching

Maintaining conversational memory

Presenting structured dish information (price, nutrition, rating)

This makes food discovery faster, smarter, and more personalized.

How does an AI food recommendation system work?

It analyzes text, images, and past interactions using AI models and vector search to suggest relevant dishes.

Can it understand food images?

Yes, it uses vision-language models to extract features from uploaded food photos.

Is it better than normal food apps?

In most cases, yes: it matches the meaning of a request rather than just its keywords, so vague or descriptive queries are more likely to surface relevant dishes.

Does it remember my preferences?

It maintains context within a session but does not store long-term personal data in this prototype.

Can restaurants use this system?

Yes, for menu analysis, description generation, and customer engagement.

Will it work offline?

No, it requires internet access for AI processing.